This post is inspired by one of my all-time favourite blogs, AI Weirdness, whose author Janelle Shane uses machine learning to develop new and strange approaches to familiar tasks. The blog showcases her experiments in training neural networks on large textual datasets, in order to create names for guinea pigs, generate new college courses, and develop pie recipes. (Warning: do not eat the pies.) She’s also used image-generating networks for purposes such as substantially increasing the horror quotient of The Great British Bake-Off and generating cats which are worse than Cats.

Although we love weird images here on the Sea of Books, creating new ones is a little outside my comfort zone. However, we do have a lot of public-domain text lying around…

Applying neural networks to Victorian literature: because why not?

The projects that we’ve been working on at the UCD Centre for Cultural Analytics tend to generate a lot of data from historical literary materials, and for a while now I’ve been very keen to see what an AI would do with it. However, my coding abilities are limited, and although I was certain that the results would be hilarious, it seemed a bit frivolous to be bothering my more technically-minded collaborators with. I was delighted when I recently discovered that an extremely straightforward interface exists, which allows you to train the language model GPT-2 with your own data. If you, like me, happen to have access to a large number of Victorian novels and a fondness for algorithmically-generated humour, this ushers in a new era of possibilities!

Back in 2018, Max Woolf created a Google Colaboratory Notebook which allows you to retrain GPT-2 on any textual data that you like. (The sample data he provides does a pretty entertaining job of faking Shakespeare, if you just want to play around with that for starters!) I’d never used a notebook like this before, but Woolf’s step-by-step instructions are extremely straightforward, and I thought even an absolute beginner such as myself should be able to follow them. So, I jumped in at the deep end, and plugged in a list of novels that I’d compiled while working on some of the surviving catalogues of a massive 19th-century subscription library.

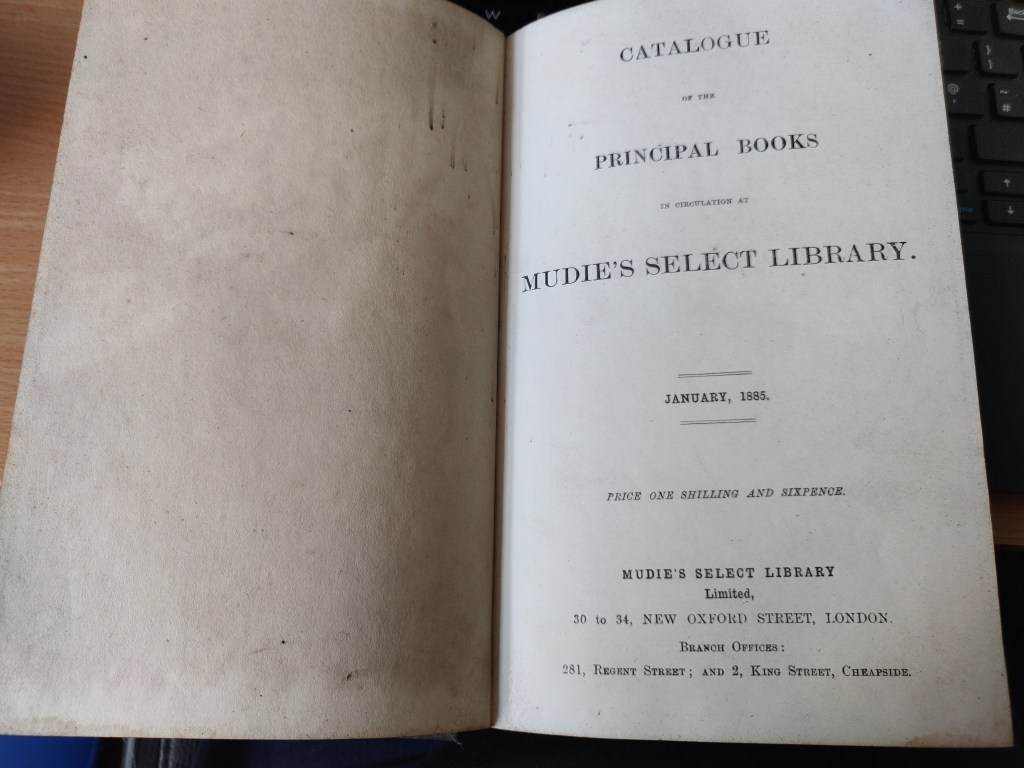

The data: Mudie’s Select Library

Mudie’s was in operation for almost a hundred years, and had thousands of subscribers, many of whom signed up for access to its primary attraction: the latest and most fashionable novels. As one of the main buyers of fiction published between the 1840s and 1890s – representing as much as 50% of the demand for many books – Mudie’s wielded what some commentators considered to be an excessive amount of influence over the mid-19th-century publishing industry in the UK and Ireland. A fair amount of scholarship has been done on Mudie’s in the past, but the catalogues have not been systematically analysed on a large scale, in part because they’re so enormous. I’m hoping to change that, so that we can better understand the market forces working on the literary landscape in 19th-century Britain, which partly determined what we now think of as the literary canon, and what we still read.

In the meantime, however, why not feed all this data to an obliging bot?

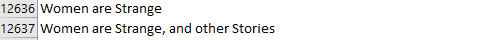

My catalogues were all published between the late 1840s and WWI, and when I’d collated all the fiction and stripped out the exact duplicates, I had a pleasingly symmetrical 17,171 titles. The vast majority of these books are more or less forgotten nowadays. (If you do feel the urge to read them, however, mass digitization projects such as Google Books and the Internet Archive have meant that most are easy to acquire as PDFs.)

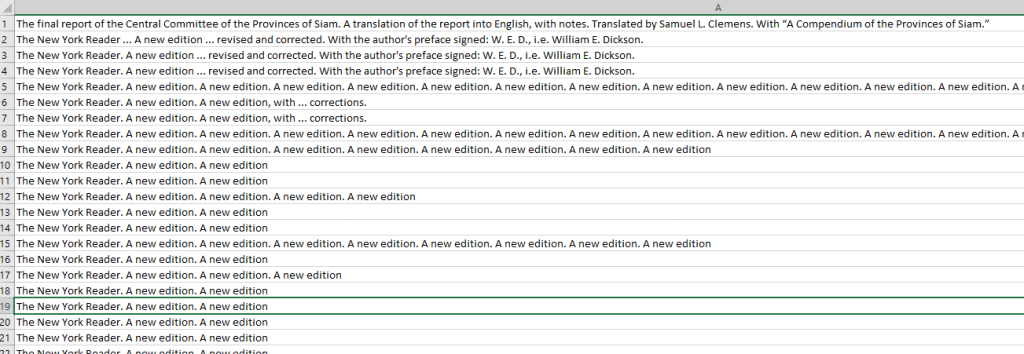

My data isn’t in perfect condition – there are some OCR and typographical errors (although not a huge quantity), and a few near-duplicates are still in there.

However, it’s not in terrible shape, and the neural network did a very impressive job of mimicking the language and format of the titles in order to come up with its own, new versions. In fact, it almost did too good of a job. For a lot of the results that it presented me with, I couldn’t tell whether I was looking at a real Mudie’s title or a robotically-authored one.

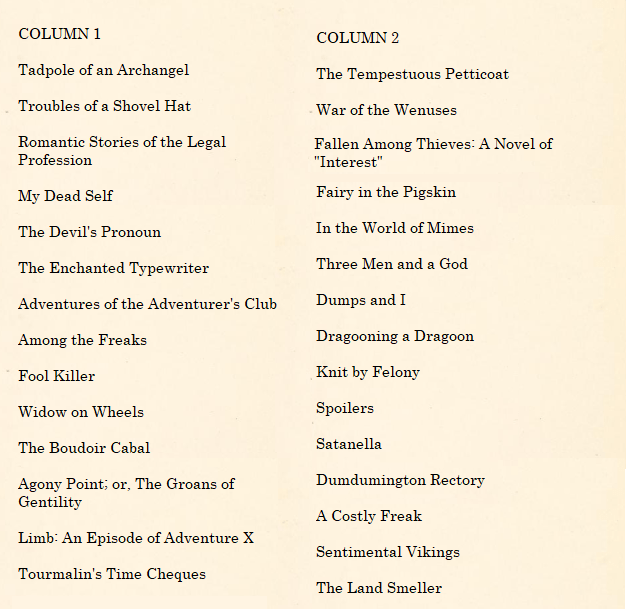

Pop quiz! Which of the columns below contains real 19th-century novels, and which contains ones that were generated by my neural network?

Actually, these are all perfectly genuine 19th and early 20th-century novel names, although some titles may have lost an initial “The” or “A” due to the cataloguer’s attempts to save space on the page. (Here’s The Tadpole of an Archangel, if you don’t believe me!)

Because of the AI’s propensity for just reproducing the training data – which seemed to gradually increase as I ran it more times – and because many of the titles are just so weird to start with, I had to do a lot of checking against the original list of works to see whether I was actually getting any new results. Turning up the “temperature” – i.e. telling the bot to get weirder – helped a bit, but the results stayed surprisingly coherent, even in terms of grammar.

In the end, however, I had a list of prospective novels which would have given any Victorian publisher pause for thought.

Say What?

For the most part, the bot did a good job of reproducing whole words and grammatical structures. Here are a few of the exceptions.

- They Like One Another a Heart

- MrsFrogs

- Was She Belonging?

- Dark O’ the Martyr

- Hair by the Coward

- I on the Verge

- Paso-PigPigs

- Crites and Scars

Why have one title, when you could have two?

It’s very common for 19th-century novels have a two-part title: for example, Middlemarch, A Story of Provincial Life, or Jane Eyre: An Autobiography. Mudie’s frequently stripped the second part out in order to save space in their catalogues, so these don’t appear too often in my training data. However, there were enough still present that GPT-2 thought it’d have a go at creating a few of its own.

- Sentimental Sir Walter’s Daughter; or Giddy Ox

- Frances, The Detective; or, Do Men Love?

- Denois Humayes; or Not by Addressing a Public Meeting

- The Sons of Getrude, A Primate; Or, The Education of Circumstance

- DUMBCOOMER; or the Next Generation

The Mudiebot also noticed that a few of my entries are still capitalized; this usually means that they appeared at the top of a physical page in the catalogue, and I haven’t yet got around to fixing it.

It couldn’t tell why these particular entries were capitalized, but it threw in a few capitals anyway, just to get into the swing of things. This presumably explains DUMBCOOMER.

Hang on, are we still in the 19th century?

A few examples showed evidence of anachronisms creeping in.

- Led Zeppelin; a Story of Quiet Life

- Cnthulhu

- Net Girls

- Weisburg, or the Third Reich

- Wei’s Daughter

- Ricroft of Chongqing

Perhaps unsurprisingly, the Third Reich, Cthulhu and Led Zeppelin do not feature in my training data (and yes, I checked just to make sure). My guess is that these are things that the language model knows about, and helpfully threw into the mix.

I was particularly intrigued by the Chinese-language elements; as well as Ricroft, the character “Wei” also appeared in Wei’s Blake and Wei’s Dearest Wish. Perhaps it’s a series?

I Can’t Believe It’s Not A Real 19th-Century Novel

Perhaps it’s because the book titles are so short and repetitive, but the most surprising part of the whole exercise was how convincing the majority of the Mudiebot titles were, at least when it wasn’t just reproducing my original data and hoping I wouldn’t notice. There were many titles – including, no doubt, some that I didn’t spot – which never actually existed, but were 100% believable to me, and I’ve spent more time looking at 19th-century library catalogues than is generally considered medically advisable.

- A Life in a Cavern

- Isabel’s Island

- Trotty’s Romance

- The Joys of Life

- The Pain of Life

- The Roses in the Wood

- Heritage of the Pendulum

- Tales of the Abbess of Blatchington

- Only A Rector

Where can I buy these ones, please

GPT-2 doesn’t really understand the somewhat gendered division of labour that most (although not all!) 19th-century novels conform to; it was quite happy presenting me with lady soldiers and admirals. I’m very much on board with this development!

Others were just generally intriguing.

- Four Horsewomen

- The Sherlocks at Church

- The Nineteenth Commandment

- Little Lady of the Depths

- St. Martin’s Cat

- Taco: Heroine & Villain

- Furious Harry

- Effie’s Gay Pride

- Cherry’s Traitors, 4th Series

- Phoebe, The Roman Soldier

I’m Not Entirely Sure What’s Going On Here

- Laughter of the Chickens

- Aesthetically, They are the Same

- The Belles and the Matchboxes

- The Crews of the Opera

- Poor Husband: A Tale

- Tongues of Rhetoric

- Japanese Jaggs

- The Impending Sword

- DOLTLANE

- Gummed Up, &c

And now for some more data: the British Library Labs Collection

Overall, GPT-2 did a very impressive job of coming up with new Victorian titles from the Mudie’s data. Frankly, I couldn’t tell the difference between the Mudiebot and your average aspiring Victorian novelist, assuming she or he was slightly drunk.

In order to see whether I could reproduce my results, I thought I’d train GPT-2- simple again, but this time on a more complex dataset.

Back in 2009, the British Library Labs created a public-domain dataset which consists of a very large number of digitized works, mostly from the 19th century. The metadata from this set provided me with just under fifty thousand unique book titles: as well as fiction, the collection contains travel writing, history, and a wide variety of other genres. These titles are more complex than those from Mudie’s: they are much longer (typically including the book title in its entirety, plus details about the edition and often the writer) and they contain a larger variety of words, having been published across a much longer time period – some date back to the 1700s. Additionally, many works are in languages other than English, including a few in Ancient Greek. How would the neural network fare under these trickier circumstances?

Things got off to a good start when I took it out for a test run, but it then got a bit fixated on a New York guidebook.

However, once I turned up its temperature, it got back to work and started generating new titles. It flirted briefly with the various language options in the dataset, but after a few iterations it wisely decided to stick with English.

Xrvztergesgeschichte und der Entwicklungsfeudenturfahrt, etc. mit den Königs Land und Freitern. Nebst einem egrüischen Karte. Zugleich von Prof. Dr. Z. A. Gross. (Eigengrüngs-Land und Landbildern und übersetzten Ungarn und eigenen Königs Ungarns seiner Gegenwart zu Rheinland und in und vergen und bearbeitet. Nach dem Rheinland und vaterländern in dem Rheinungsgeschichte. Erster und Verbindung. Neu in vergangenatur

This right here: not German.

Again, the neural network came up with quite a few suggestions that were so good I had to check to see whether they actually existed.

- The Journey of a Scottish Wolfhound. By the author of “Dramatic Poetry” J. Browning, etc Appendix

- The Land of the Snow Bears: being a sketch of a fifteen years’ farm life, etc

- The Sentimental Tenet of Ayrton Gown. A psychological romance. By the author of “Loyalty,” etc. Miss C. J. Roberts.

- Friendship’s Sufferings. A novel

- The Builder’s House, or, the Seven Fires of Babel. (Poems)

- The Doomed Mind. A novel

- Somebody’s Book of Poetry, with illustrations. Edited by J. Browning

- Polly-Polly; or, the Mysteries of the Old Manor

- Some things in this world. Stories by various authors

A few options sounded good in theory, but didn’t hold up on closer inspection.

- Golden Stories for Christmas: a collection of tales, songs, and offal … With an introduction and notes by W. Rae … Illustrated, etc. Eighty illustrations by H. Cardell … From the “Guardian.”

- Aunt Ann; or, the Woman who loved him

- A Letter of thanks to Almighty God, in the Tower of Babel and other verses, delivered on his birthday, 1789; as being the commencement of the present year, 1798

These ones are strangely compelling, if perhaps less likely to convince a commissioning editor:

- Recursion in a Country Park

- Logan’s Bungaloo. An Oudeck-a-Bilk’s and a grundwaag

- Doctor Peachy; or, the Stag Hole of Ireland

Interestingly, the BLLbot noticed that many of the titles of the real books in the training data make reference to their author’s other works. In a few cases, the neural network picked an existing work out of the real corpus and inserted it into a completely new title, in order to make it sound more like a book you might have heard of. This is something that plenty of Victorian publishers tried at one time or another!

Here’s one that was generated by the BLLbot:

The Trials of a Soldier. A tale. By the author of “The Epic of the Wrongs of Ireland” i.e. F. W. S. Maxwell … With illustrations, etc

“The Trials of a Soldier” sounds eminently plausible, but doesn’t exist, and neither does its author F. W. S. Maxwell. However, there is a real book in the British Library collection called The Wrongs of Ireland, historically reviewed from the Invasion to the present time.

Sure, it might just be taking different components and mashing them together, but on the whole it does quite a convincing job of it!

A few final thoughts

Despite a tendency towards repeating items from the training data (which I understand is somethat that AIs will typically tend to do, if they can get away with it), this neural network came up with a set of titles that were remarkably convincing. Overall, it did a better job (i.e. a more creative one, with fewer direct copies of the training data) when I gave it a bigger dataset to play with – perhaps not a surprising result! I’m particularly intrigued by the way it identified and reproduced some of the structures and conventions present in the original materials, such as referencing other works by the “author”.

A question remains: can GPT-2 write 19th-century novels in an equally convincing manner? Stay tuned to find out!

I am indeed staying tuned. Cor, what larks! Some really impressive titles generated here, along with genuine dross. Mind you, The Akond of Swot — what bot would have one believe that … oh.

LikeLike

Good point! I suppose writing book titles has always been an exercise in balancing “safe” against “thought-provoking”, and real-world examples fall both sides of the line!

LikeLiked by 1 person

Curious — I’ve just received notification of this, your reply, which you posted … in April?!

LikeLike

Then there are fashions in titles, aren’t there, and I expect it would be possible to expound mightily on that — but maybe you have! Must have a browse now…

LikeLike